Claude Opus 4.7 vs GPT-5.4: It's Not a Benchmark Question. It's a Shape Question.

- Published on

Every few months the benchmark posts come back. Which model scored higher on MMLU. Which one won the coding eval. Which one passed the bar exam.

And every time, the wrong teams use this to make architecture decisions.

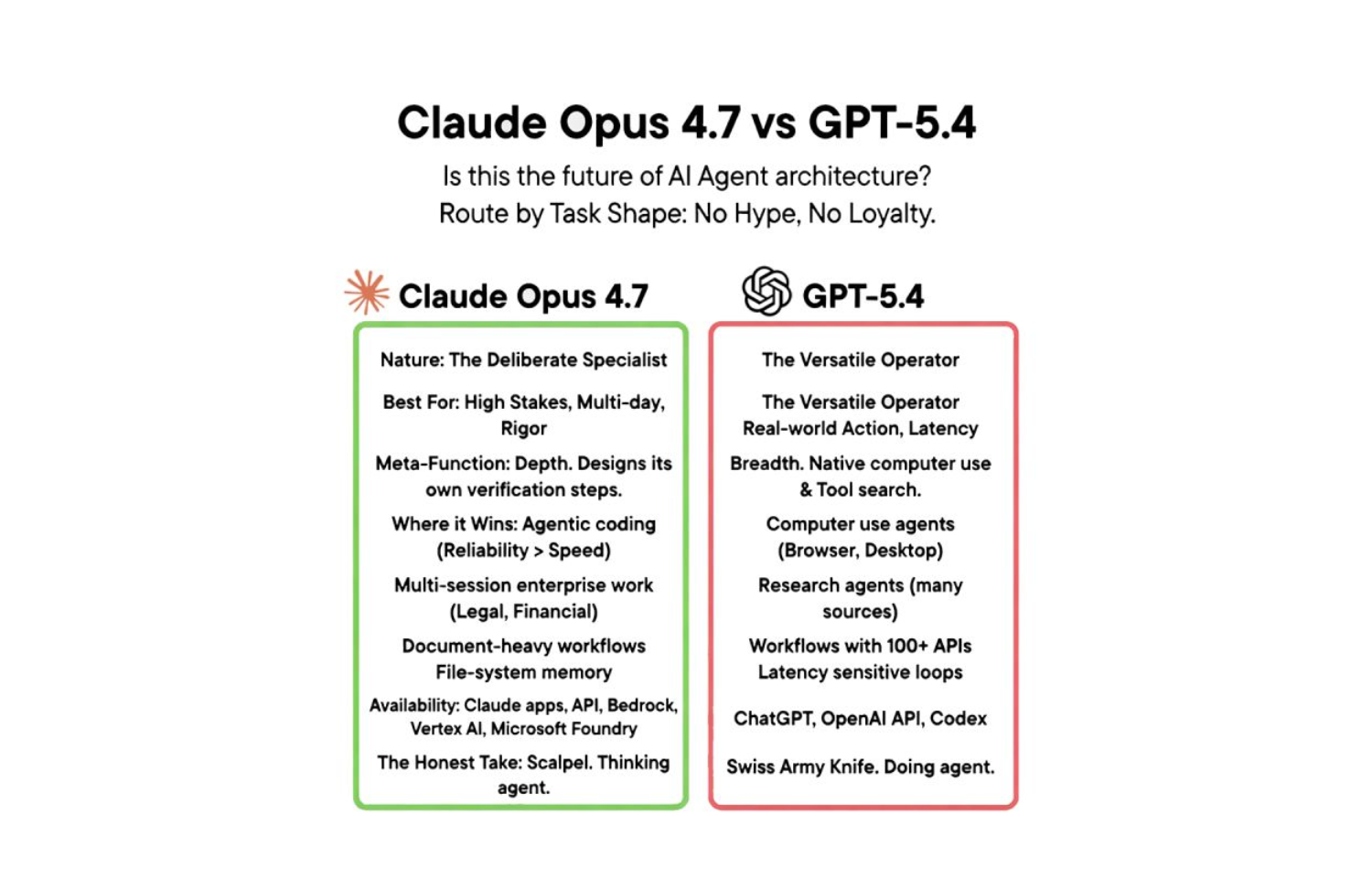

Here is the honest take: Claude Opus 4.7 and GPT-5.4 are not competitors in a race. They are instruments designed for different cuts. Picking between them by benchmark score is like choosing a scalpel over a multi-tool based on which one costs more. That is not the dimension that matters when you are in the operating room.

After real time with both models across production workloads, agentic coding pipelines, enterprise document processing, multi-system orchestration, and autonomous agents operating on live data, here is the framework that actually holds up.

The Wrong Question

The question most teams ask: Which model is smarter?

The question that produces better architecture: What shape is the work I am asking the agent to do?

Task shape has three dimensions that determine model fit:

- Horizon: Is this a single turn or a multi-step chain that compounds errors?

- Stakes: Is a wrong step recoverable in seconds, or does it corrupt downstream state?

- Surface: Does the work live inside a document and context window, or does it require acting on the external world?

Once you map a task to those three dimensions, the routing decision becomes obvious. It stops being a preference question and becomes an engineering decision.

Claude Opus 4.7: The Deliberate Specialist

Opus 4.7 was built for work that punishes shortcuts.

The distinguishing characteristic is not raw intelligence, it is rigor under uncertainty. Opus 4.7 does not just complete the task; it designs verification steps before declaring completion. It builds the output and then constructs a separate check to validate that output. That is not a chatbot behavior. That is a senior engineer double-checking their own work before pushing to production.

This shows up most clearly in agentic coding pipelines. A well-structured Opus 4.7 agent on a complex refactoring task does not just edit files and exit. It runs the test suite, reads the output, traces any failures back to the change set, and iterates until the verification step passes. It treats completion as a claim that needs to be proven, not an action that needs to be taken.

Where Opus 4.7 has a structural advantage:

Agentic coding where reliability beats speed. Multi-file refactors, dependency upgrades, codebase migrations. Tasks where one wrong assumption in step three causes silent failures in step twelve. Opus 4.7's self-verification loop catches those before the pipeline exits.

Long-horizon enterprise work. Legal document analysis, financial model review, research synthesis. Tasks that run across multiple sessions, accumulate context over time, and require the model to maintain coherent reasoning across tens of thousands of tokens without drift.

Document-heavy workflows. Opus 4.7's vision capabilities operate at high resolution across PDFs, charts, slide decks, and tracked-change documents. If the task requires reading a 200-page contract and identifying clauses that conflict with a standard template, this is the correct instrument.

File-system memory across multi-day projects. Opus 4.7 can be paired with file-based memory systems to maintain project context across sessions. The model architecture handles long context well enough that this pattern stays coherent even when resuming work after significant time gaps.

# Opus 4.7 self-verification pattern in an agentic loop

async def run_with_verification(agent, task):

result = await agent.execute(task)

# Model generates its own verification step

verification = await agent.verify(

task=task,

result=result,

instruction="Identify what could be wrong with this output before declaring it complete"

)

if verification.has_issues:

return await run_with_verification(agent, task.with_context(verification.issues))

return result

The loop above is not just a retry mechanism. The verification step uses the model to audit its own output against the original task requirements, a fundamentally different capability than simply re-running the task on failure.

Available on: Claude apps, API, Amazon Bedrock, Google Vertex AI, Microsoft Azure AI Foundry.

GPT-5.4: The Versatile Operator

GPT-5.4 was built for agents that act in the world.

Where Opus 4.7 excels at depth inside a context window, GPT-5.4's edge is breadth across surfaces. It is the first mainline OpenAI model with computer use trained natively into the base model, not bolted on as a capability module. On desktop control benchmarks, GPT-5.4 operates at roughly human level. Click targets, form fields, navigation flows, UI state changes, the model maps visual state to action reliably enough to handle production automation workflows.

It also ships with native tool search, which changes the economics of large-scale agentic orchestration. When your agent needs to operate across dozens of APIs and the tool list exceeds what fits cleanly in a single context, GPT-5.4's architecture handles the routing without manual tool selection engineering. This scales agent capability past the toy demo range into real production tool ecosystems.

Where GPT-5.4 has a structural advantage:

Computer use agents. Browser automation, desktop app control, UI testing that requires visual interpretation. If the task requires clicking through a legacy web interface that has no API, GPT-5.4 is the right model. Opus 4.7 can do computer use, but GPT-5.4's native training shows up in reliability on ambiguous visual states.

Research agents crawling multiple sources. Tasks that require visiting fifteen web pages, synthesizing contradictory information, and producing a structured report benefit from GPT-5.4's throughput efficiency. The model handles breadth-first information gathering without the cognitive overhead that slows high-rigor models on simpler tasks.

Workflows with 100+ API connectors. Enterprise integration layers, workflow automation, multi-system orchestration where the agent needs to coordinate across Salesforce, Jira, Slack, and five internal APIs in a single workflow. Tool search reduces the schema overhead that would otherwise consume context budget.

Latency-sensitive production loops. When your agent runs in a feedback loop where response time directly affects user experience, GPT-5.4's token efficiency is margin. Faster time-to-first-token with comparable quality on well-defined tasks makes it the better choice when speed is a product constraint, not just a preference.

// GPT-5.4 computer use agent pattern

const agent = new OpenAI.Agent({

model: "gpt-5.4",

tools: [

{ type: "computer_use" },

{ type: "tool_search", tool_registry: enterpriseToolRegistry }

]

});

// Navigate UI, fill forms, extract data, no API required

const result = await agent.run({

task: "Log into the legacy reporting portal, run the Q1 revenue report, and extract the regional breakdown table",

context: { screenshot: await captureScreen() }

});

Available on: ChatGPT, OpenAI API, Codex.

The Honest Comparison Nobody Posts

Reading the vendor pages, you would think this is Ford vs. Chevy. Two trucks. Pick the one with the better cup holders.

It is not. It is a scalpel versus a Swiss Army knife.

| Dimension | Claude Opus 4.7 | GPT-5.4 |

|---|---|---|

| Self-verification | Native to reasoning loop | Requires explicit prompting |

| Computer use | Capable | Native, human-level benchmark |

| Document processing | High-res vision, long context | Good, shorter horizon |

| Tool breadth | Strong via MCP | Native tool search at scale |

| Multi-session memory | File-based, high coherence | Session-scoped by default |

| Latency | Deliberate | Faster on defined tasks |

| Best single-word description | Rigorous | Versatile |

Neither column is a winner. They are descriptions of different instruments.

The teams that lose time, and money, are the ones that pick a model and use it for everything. The teams that win are the ones that look at a task, map it to its shape, and route accordingly.

The Decision Framework: Route by Task Shape

Before choosing a model, answer three questions:

1. What is the cost of a wrong step?

If a wrong step in the middle of the task corrupts downstream state, causes a bad write to production data, or creates an error that compounds invisibly, use Opus 4.7. Its self-verification loop catches the class of error that only shows up after the damage is done.

If a wrong step is immediately visible, easily corrected, or limited in blast radius, GPT-5.4's throughput advantage is worth the tradeoff.

2. Where does the work live?

If the task lives inside documents, codebases, and structured data, Opus 4.7 is the right instrument. It was designed to reason deeply over large context with high fidelity.

If the task requires acting on the external world, clicking, navigating, filling, extracting from live UIs, GPT-5.4's native computer use is the structural advantage.

3. How many tools does the agent need?

Under 20 tools: both models handle this without architecture changes.

20–100 tools: MCP with Opus 4.7 gives you governed access with clear tool schemas. GPT-5.4 with tool search handles the routing automatically.

100+ tools: GPT-5.4's tool search was designed for this range. Fighting it with a manually curated tool list adds engineering overhead with no quality return.

The Production Architecture: Use Both

The best production systems are not running one model for everything.

They are running two models in composition. One to think. One to do.

┌─────────────────────────────────────────────────┐

│ Task Orchestrator │

│ (routes by task shape) │

├─────────────────┬───────────────────────────────┤

│ Claude Opus 4.7│ GPT-5.4 │

│ │ │

│ Long-horizon │ Computer use │

│ reasoning │ Browser automation │

│ Code review │ High-volume API calls │

│ Doc analysis │ Multi-system orchestration │

│ Verification │ Latency-sensitive loops │

└─────────────────┴───────────────────────────────┘

A research pipeline that works in production: GPT-5.4 crawls the sources, handles the browser navigation, extracts the raw data. Opus 4.7 receives the extracted data, reasons over it, identifies inconsistencies, drafts the synthesis, and validates its own output before returning it. The output quality is higher than either model alone. The cost is lower than running Opus 4.7 on the crawl phase.

A deployment agent that works in production: Opus 4.7 reviews the diff, reasons about risk surface, and produces a structured deployment plan with verification steps. GPT-5.4 executes the plan, interacting with CI/CD dashboards, monitoring consoles, and deployment tooling through computer use. The reasoning stays in the high-rigor model. The execution stays in the high-throughput model.

class TaskRouter:

def route(self, task: Task) -> str:

if task.requires_computer_use or task.tool_count > 50:

return "gpt-5.4"

if task.horizon == "long" or task.stakes == "high" or task.requires_verification:

return "claude-opus-4-7"

# Default: match to cost/latency profile

return "claude-opus-4-7" if task.context_length > 50_000 else "gpt-5.4"

This is not a theoretical pattern. It is the architecture of every serious agentic system we have seen in production at enterprise scale.

What This Means for Your Stack

The model decision is not a product decision. It is a system design decision.

Start with the task graph, not the model spec. Map what your agent actually needs to do. Every step. Every tool call. Every decision point. Then annotate each step with horizon, stakes, and surface. The model routing falls out of that analysis.

Do not let vendor loyalty make the decision. The teams that over-index on Anthropic or OpenAI out of preference are paying an invisible tax, either in quality on tasks the wrong model handles poorly, or in latency and cost on tasks that could be handled by a faster model.

Plan for routing from day one. Adding a second model to an agent system that was designed for one is harder than designing for two from the start. Build the routing layer early. The abstraction pays off the first time task requirements change.

Measure what matters. Benchmarks measure average capability across standardized tasks. Your task is not standardized. Measure your specific success criteria, correctness rate, verification failure rate, latency at the p95, cost per successful task completion, and let those metrics drive model selection.

Conclusion

Claude Opus 4.7 is a deliberate specialist. It is the instrument you reach for when rigor matters more than throughput, when the task requires self-verification, long-horizon reasoning, or high-stakes document work where a wrong answer is worse than a slow one.

GPT-5.4 is a versatile operator. It is the instrument you reach for when breadth matters more than depth, when the agent needs to act across many surfaces, coordinate across hundreds of tools, or operate on live UIs that have no API.

The choice between them is not a measure of intelligence. It is a measure of fit.

Route by task shape. Not by loyalty. Not by benchmark. By the cut you are making.

At JMS Technologies Inc., we design production agentic systems across the full model stack, single-model pipelines built for specific task profiles and multi-model architectures that route intelligently across Claude, GPT, and specialized models based on task shape, cost constraints, and quality requirements.

Building a production agent that needs to get this right? Let's talk.