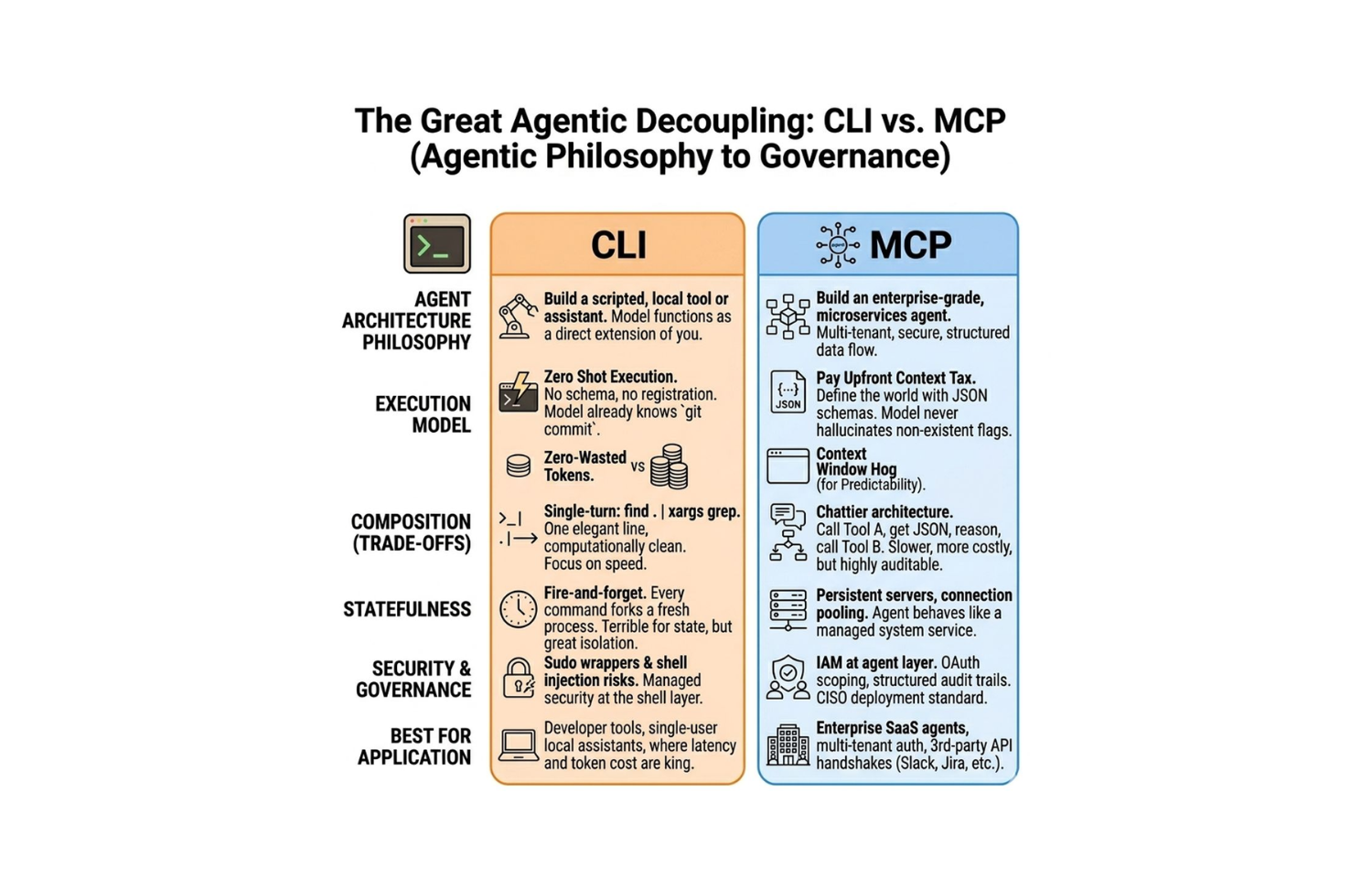

The Great Agentic Decoupling: CLI's Brute Force vs. MCP's Governance

- Published on

We've spent decades perfecting the CLI because it is the universal language of the engineer. Every system exposes it. Every developer speaks it. The Unix Philosophy, composable, orthogonal, text-streaming tools, remains one of the most elegant design frameworks ever shipped.

But elegant tools designed for humans hit a ceiling when handed to autonomous agents. The same properties that make CLI powerful for engineers, raw access, direct execution, minimal abstraction, become liabilities in systems operating without a human reviewing each command.

MCP, the Model Context Protocol introduced by Anthropic, is not a replacement for CLI. It is a different layer of the agentic stack, solving a fundamentally different class of problems. Understanding the tradeoffs between them is not academic, it determines whether the agents you build are developer tools or enterprise-grade autonomous systems.

1. The Problem That Created the Split

Before the distinction matters, it helps to understand why it exists at all.

First-generation AI agents were built on the simplest possible integration model: give the model access to a shell, let it execute commands, capture stdout, feed it back as context. This works at the prototype stage because it leverages everything the model already knows. LLMs trained on code understand git, grep, curl, docker, and thousands of other tools with no additional instruction.

The problem surfaces at production. Autonomous agents executing shell commands in multi-tenant environments, touching production data, or operating across organizational boundaries inherit every security, auditability, and reliability weakness of direct shell access, amplified by the fact that there is no human reviewing the command before it executes.

The question that splits CLI from MCP is precise: do you need execution speed and simplicity, or do you need governed access and auditability?

The answer determines the architecture.

2. Cold Start vs. Context Tax

The most immediate practical difference between CLI and MCP is initialization cost.

CLI gives you zero cold-start overhead. The model already knows what git commit, kubectl apply, and npm run build do. There is no schema to pass, no server to register, no capability manifest to load. The agent reasons about the available tooling from training knowledge alone and issues commands immediately. First-token-to-first-action latency is minimized.

# Zero schema overhead, model knows this natively

git log --oneline -20 | grep "feat:"

find . -name "*.test.ts" | xargs grep "TODO"

kubectl rollout status deployment/api-server

MCP requires upfront context investment. Every session begins by loading the capability manifests of the connected servers, structured JSON schemas describing what tools exist, what parameters they accept, what resources they expose. This context is consumed before any work begins.

{

"tools": [

{

"name": "github_create_pull_request",

"description": "Create a pull request in a repository",

"inputSchema": {

"type": "object",

"properties": {

"repository": { "type": "string" },

"title": { "type": "string" },

"body": { "type": "string" },

"base": { "type": "string" },

"head": { "type": "string" }

},

"required": ["repository", "title", "base", "head"]

}

}

]

}

This is not wasted overhead. It is the price of guaranteed correctness. The model cannot hallucinate a parameter that does not exist in the schema. It cannot invoke a tool action that was not explicitly declared. The context tax buys constraint, and constraint at the tool layer is how you prevent the class of errors that appear at 3am when the agent invented a --force-merge flag that maps to something unexpected in the underlying system.

For short-lived developer tools with single users, the tax is rarely worth paying. For enterprise agents touching production systems, it is non-negotiable.

3. Composition: Pipes vs. Orchestration

Unix pipes are one of the most powerful composition primitives ever designed. The elegance is in the constraints: every tool reads from stdin and writes to stdout, which means every tool composes with every other tool with no adapter required.

find . -name "*.log" \

| xargs grep -l "ERROR" \

| xargs -I{} awk '/ERROR/{count++} END{print FILENAME, count}' {} \

| sort -k2 -rn \

| head -10

This is a single logical operation. It is computationally efficient, executes in a single turn, and produces a result with minimal LLM involvement. For well-defined, predictable tasks, log analysis, file transformation, batch operations, CLI composition is nearly unbeatable.

MCP forces a chattier architecture. There are no pipes. There is an orchestration loop. The agent calls a tool, observes the result, reasons about the next step, calls another tool. Each step is an LLM inference call plus a tool invocation roundtrip.

Agent reasoning: "I need to find errors in recent logs, then create a ticket"

Step 1: list_log_files({ since: "24h", level: "error" })

→ { files: ["api.log", "worker.log"] }

Step 2: read_log_file({ file: "api.log", filter: "ERROR" })

→ { entries: [...] }

Step 3: analyze_patterns({ entries: [...] })

→ { summary: "...", affected_services: [...] }

Step 4: create_jira_ticket({

title: "...",

description: "...",

priority: "high",

labels: ["production", "error"]

})

→ { ticket_id: "INFRA-4291", url: "..." }

This is slower and more expensive than the equivalent shell pipeline. But it is infinitely more debuggable. Every step is a discrete, logged, auditable event. When something goes wrong, you have a full execution trace. You know exactly which tool was called, what arguments it received, and what it returned. Post-mortem analysis on a CLI pipeline that went wrong in an agent context is substantially harder.

The tradeoff maps cleanly to use case. CLI composition for speed-critical, well-defined tasks. MCP orchestration for complex, multi-system workflows where debuggability and auditability matter more than raw throughput.

4. Statefulness: Fire-and-Forget vs. Persistent Sessions

This is the architectural argument where MCP has the clearest systems-design advantage.

Every CLI invocation forks a fresh process. State does not persist between commands unless explicitly serialized and passed forward. For file operations and simple transformations, this is fine. For anything requiring session context, connection state, or transactional continuity, it becomes an engineering problem.

Consider an agent that needs to run a series of related database queries across a long-horizon task:

# CLI approach: reconnect on every call

psql $DATABASE_URL -c "BEGIN;"

psql $DATABASE_URL -c "SELECT * FROM orders WHERE status='pending';"

psql $DATABASE_URL -c "UPDATE orders SET status='processing'..."

psql $DATABASE_URL -c "COMMIT;"

Each command re-establishes a TCP connection, re-authenticates, and runs in a separate session. The BEGIN and COMMIT are in different database sessions, the transaction does not span them. The agent has to work around the fire-and-forget model at every step.

MCP servers are persistent processes. They maintain connection pools, session state, and context across the lifetime of an agent session. The same MCP server that handles the first tool call handles the tenth. A database MCP server maintains a connection pool and can execute transactional sequences as a single logical operation. A filesystem MCP server maintains working directory context. An API MCP server manages OAuth token refresh transparently.

MCP Database Server (persistent connection pool):

call: begin_transaction()

→ { transaction_id: "txn_001" }

call: query({ sql: "SELECT ...", transaction_id: "txn_001" })

→ { rows: [...] }

call: execute({ sql: "UPDATE ...", transaction_id: "txn_001" })

→ { affected: 12 }

call: commit_transaction({ transaction_id: "txn_001" })

→ { status: "committed" }

This is the behavior of a microservice, not a shell script. Long-running agents operating across minutes or hours, maintaining context across dozens of tool calls, require the statefulness model that MCP provides by design.

5. The Security Frontier

Security is where the CLI-in-production argument collapses for autonomous agents.

Direct shell execution by an AI agent is a significant attack surface. The risks are familiar from traditional software security but amplified by the non-determinism of LLM-generated commands:

Prompt injection through tool output. If an agent reads a file or API response that contains adversarial content, a malicious actor can embed instructions that cause the agent to execute unintended commands. The model processes the file content as context and may act on instructions embedded in it.

Shell injection through constructed arguments. When agents construct shell commands by interpolating variable content, injection vectors appear. The same class of bug that creates SQL injection in web applications creates command injection in agentic systems.

# If filename comes from untrusted input, this is a command injection vector

cat "$user_provided_filename"

# Example attack: filename = "report.pdf; rm -rf /tmp/*"

cat "report.pdf; rm -rf /tmp/*"

Scope creep through ambient authority. A CLI agent running as a user with broad system permissions has ambient access to everything that user can access. The principle of least privilege is structurally difficult to enforce at the shell layer without building privilege management infrastructure that approaches MCP's complexity anyway.

MCP addresses these at the protocol level. Tool definitions are declared schemas, not free-form command strings. The agent cannot construct arbitrary shell commands, it can only invoke declared tools with declared parameters. The server validates inputs against the schema before execution.

Authorization is first-class in MCP's design. Servers can implement OAuth scopes that limit which tools a given client can invoke. A read-only analytics agent gets read resource access. A deployment agent gets specific write tools for specific environments, nothing broader. Every tool invocation is logged against an authenticated client identity.

{

"authorization": {

"client_id": "agent-deploy-staging",

"scopes": [

"deploy:staging:read",

"deploy:staging:write",

"logs:staging:read"

],

"denied": [

"deploy:production:write",

"infra:delete"

]

}

}

This is IAM at the agent tool layer. It is what a CISO needs to see before signing off on autonomous agent deployment in a regulated environment. Shell wrappers, sudo policies, and capability restrictions are all valid engineering approaches, but they are retrofitting a governance model onto a tool that was not designed for it. MCP has governance built into the protocol.

6. Decision Framework: When to Use Each

The choice between CLI and MCP is not binary and it is not permanent. It is a function of deployment context.

Choose CLI when:

- You are building developer tooling, local assistants, or productivity automation

- The agent operates as an extension of a single authenticated user

- Execution latency and token cost are the primary constraints

- The task is well-bounded and the failure blast radius is limited to the local environment

- You need to compose tools that only expose CLI interfaces

Choose MCP when:

- The agent operates in a multi-tenant environment or touches data belonging to multiple users

- You need per-client authorization and scope enforcement

- Integration requires third-party API handshakes with OAuth flows (Slack, Jira, Salesforce, GitHub)

- Audit trails and structured logging are required for compliance

- The agent needs to take actions with production data or irreversible consequences

- You need persistent connections, connection pooling, or session state across tool calls

Use both when:

- MCP governs the session and enforces authorization

- CLI-based tool implementations execute the heavy lifting inside MCP tool handlers

- The MCP server wraps shell operations with input validation, scope checks, and structured logging before passing arguments to the underlying command

This is not a theoretical pattern. It is how well-architected agentic platforms actually work in production.

7. The Hierarchy in Practice

The most useful mental model is a layered stack, not a binary choice.

┌─────────────────────────────────────┐

│ Agent / LLM Layer │ ← Reasons, plans, selects tools

├─────────────────────────────────────┤

│ MCP Protocol Layer │ ← Governs access, scopes, audit

├─────────────────────────────────────┤

│ MCP Tool Implementations │ ← Can internally use CLI tools

├─────────────────────────────────────┤

│ CLI / System Layer │ ← Raw execution, file ops, processes

└─────────────────────────────────────┘

The MCP layer does not replace CLI execution, it wraps it in a governance model. A deployment tool inside an MCP server might internally call kubectl or terraform. A file analysis tool might internally use ripgrep or awk. The agent never sees the raw shell command. It sees a declared tool with validated inputs and a structured response.

This separation is what makes the architecture both powerful and auditable. The agent has the full expressiveness of CLI tooling without direct shell access. The MCP layer has the full observability of structured protocol communication without needing to reimplement every system operation from scratch.

# MCP Tool handler, governs the CLI execution internally

@mcp_tool("search_codebase")

async def search_codebase(pattern: str, path: str, file_type: str) -> ToolResult:

# Validate inputs against declared schema before touching the shell

if not is_safe_pattern(pattern):

raise ToolError("Pattern contains unsafe characters")

# Scope check, verify client has read access to this path

if not client.has_scope(f"codebase:read:{path}"):

raise AuthorizationError("Insufficient scope")

# Internal CLI execution, wrapped and controlled

result = await run_subprocess([

"rg", "--json", "-t", file_type, pattern, path

])

# Structured, logged response

await audit_log.record(client_id, "search_codebase", {"pattern": pattern, "path": path})

return ToolResult(matches=parse_ripgrep_json(result.stdout))

The CLI tool executes with the speed and power it was designed for. The MCP layer ensures it only executes with validated inputs, under the correct authorization, with a complete audit record.

8. What This Means for Enterprise Agent Architecture

The practical implication for teams building autonomous systems at scale:

Start with the trust model. Before writing any integration code, define where the agent sits in your trust hierarchy. A personal developer assistant lives at a different trust level than an agent that writes to a production database or sends communications on behalf of users. The trust model determines which layer of the stack is load-bearing.

Design MCP boundaries around authorization domains. MCP servers should align with your existing IAM boundaries. If you have separate permission models for staging and production environments, they should be separate MCP servers or separate scopes within a server. The granularity of your MCP authorization determines the granularity of agent permissions.

Treat CLI as an implementation detail, not a protocol. Shell commands are execution mechanisms. They are not an integration protocol between systems. When agents need to interact with systems, not just operate on local files and processes, design those interactions as structured tool definitions, even if the implementation uses CLI internally.

Build audit infrastructure before enabling autonomous action. Every MCP tool invocation that takes a consequential action, writes data, calls external APIs, modifies system state, should generate a structured audit record. This is not a post-launch compliance requirement. It is the foundational observability that lets you understand what your agents are doing and recover when something goes wrong.

Conclusion

The Unix Philosophy is not wrong. It is right for the problems it was designed to solve: composing discrete, well-defined operations in interactive developer workflows. It remains the right tool for local developer assistants and low-blast-radius automation.

But autonomous agents operating at enterprise scale, touching production data, acting on behalf of multiple users, and integrating across organizational boundaries are a fundamentally different class of system. They require a protocol designed for governance, not just execution.

MCP brings IAM to the agent layer. CLI provides the execution substrate that MCP tools can leverage internally. The architectures are not in competition, they are in composition.

The real question is not which protocol wins. It is whether you would trust your agent with your customer's data, unsupervised, at 3am. If the answer is not an immediate yes, the architecture needs another layer.

At JMS Technologies Inc., we design agentic systems across the full protocol stack, from developer tooling built on direct execution to enterprise-grade autonomous agents with governed MCP architectures, structured audit trails, and IAM-integrated authorization models.

Building an autonomous agent that needs to survive production? Let's talk.