AI Agent-Enabled Development: Why the SDLC Is Quietly Becoming the ADLC


Software development is undergoing one of its biggest shifts since the introduction of graphical user interfaces.

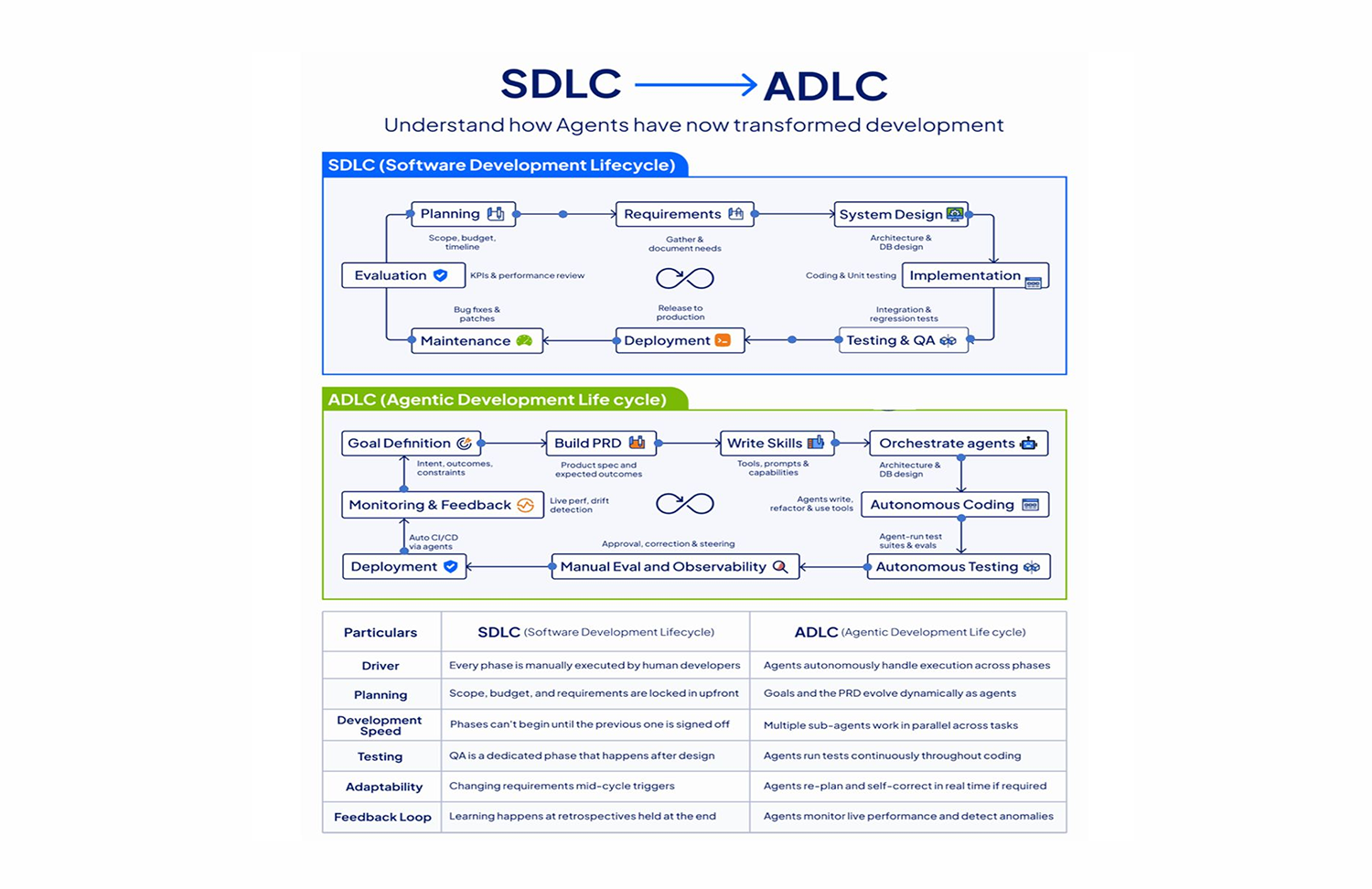

For decades, engineering teams operated inside a well-understood structure: the Software Development Lifecycle (SDLC). Requirements, design, implementation, testing, deployment, maintenance. Each phase handed off to the next. Primarily human. Largely sequential.

That model is not broken. But it is being quietly replaced.

The rise of AI agents is not simply automating individual tasks within the SDLC. It is restructuring the entire lifecycle. What is emerging looks less like software development with AI tools and more like a new model: the Agent-Driven Development Lifecycle (ADLC), where humans define intent and intelligent systems execute, verify, and adapt continuously.

1. What Changed: From Tools to Active Participants

The key distinction is not capability. It is agency.

Previous generations of developer tooling were reactive. A linter catches an error after you write it. An IDE suggests a completion as you type. A CI pipeline runs tests after you push. In all these cases, the human initiates, and the tool responds.

AI agents operate differently. They can:

- Hold context across a multi-file codebase

- Plan and execute multi-step tasks without step-by-step instruction

- Generate, test, and iterate on code in a continuous loop

- Detect failures, propose root causes, and apply fixes autonomously

- Operate in parallel across multiple workstreams

This is not a faster linter. This is a system that participates in development the way a junior engineer does, except without context-switching costs, fatigue, or the need for a standup.

The 2026 Anthropic Agentic Coding Trends report documents this shift in production environments. Companies like Wiz, CRED, and Rakuten are running agent-assisted development workflows that have measurably compressed delivery cycles without reducing quality.

2. The Traditional SDLC: Built for a Different Constraint Set

The SDLC was designed for a world with specific constraints:

- Humans have limited bandwidth and attention

- Communication between phases is expensive

- Errors discovered late in the cycle are extremely costly to fix

- Coordination requires handoffs, documentation, and review gates

In this context, sequential phases made sense. You front-loaded requirements gathering to reduce rework. You formalized design to reduce implementation drift. You isolated testing to create a quality gate before release.

This model worked well when software systems changed slowly and teams were the primary throughput bottleneck.

In modern high-velocity environments, those constraints have shifted. The cost of communication has dropped with better tooling. Deployment frequency has increased with CI/CD. And now, with agents, the cost of iteration has dropped dramatically.

When iteration is cheap and fast, the sequential phase-gate model becomes a bottleneck rather than a safeguard.

3. The Agent-Driven Development Lifecycle (ADLC)

The ADLC does not discard the activities of the SDLC. It restructures how and when they happen.

In the traditional model, phases are sequential and owned by distinct roles. In the ADLC, execution becomes parallel and continuous.

Traditional SDLC:

Requirements → Design → Implementation → Testing → Deployment → Maintenance

Agent-Driven Development Lifecycle (ADLC):

Human: defines intent, architecture, constraints, and quality standards

Agents: generate, test, refactor, verify, deploy, and monitor, continuously and in parallel

Feedback loop: telemetry and signals flow back into planning in real time

The human role does not disappear. It shifts upstream. Engineers move from executing each phase to designing the systems, constraints, and goals that agents operate within.

4. Continuous Testing: From Stage to Persistent Layer

In the SDLC, testing is a phase. It happens after implementation, typically before a release candidate. This creates a well-known problem: bugs discovered late are expensive to fix, and the feedback loop between writing code and validating it is measured in days or weeks.

In the ADLC, testing is a persistent layer, not a stage.

Agents generate unit tests, integration tests, and regression suites as code is being written. They run those tests continuously, flag failures immediately, and in some configurations propose fixes without waiting for a human to triage.
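As a rough illustration of the loop described above, the following Python sketch runs a test suite, asks for a fix on failure, and escalates to a human after a bounded number of attempts. The names `verify_loop`, `run_tests`, `propose_fix`, `max_fixes`, and the toy stand-ins are hypothetical and do not come from any particular agent tool; the two callables stand in for whatever test runner and agent a team actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class LoopResult:
    status: str                 # "passed" or "needs_human"
    fixes_applied: int
    code: str
    failures: List[str] = field(default_factory=list)


def verify_loop(
    code: str,
    run_tests: Callable[[str], List[str]],          # returns a list of failure messages
    propose_fix: Callable[[str, List[str]], str],   # returns revised code
    max_fixes: int = 3,
) -> LoopResult:
    """Test, fix, and re-test until the suite passes or the fix budget is spent."""
    fixes = 0
    while True:
        failures = run_tests(code)
        if not failures:
            return LoopResult("passed", fixes, code)
        if fixes >= max_fixes:
            # Stop iterating and hand the remaining failures to a person.
            return LoopResult("needs_human", fixes, code, failures)
        code = propose_fix(code, failures)
        fixes += 1


# Toy stand-ins (assumptions for illustration only).
def toy_tests(code: str) -> List[str]:
    return [] if "return" in code else ["function returns nothing"]


def toy_fix(code: str, failures: List[str]) -> str:
    return code + "\n    return x"
```

The bounded fix budget is a design choice in this sketch: without it, an agent that cannot resolve a failure would keep iterating instead of escalating.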

The implications are significant:

- Edge cases are caught during implementation, not after

- Regression coverage grows automatically as the codebase evolves

- QA shifts from reactive validation to continuous verification

- The cost of a bug drops dramatically because the detection-to-fix cycle compresses from days to minutes

This is not theoretical. Tools like Claude Code, GitHub Copilot Workspace, and Cursor are already operating in this paradigm in production engineering teams.

5. Dynamic Planning: Goals That Evolve

Traditional product requirements documents assume fixed goals. A PRD defines what should be built, and the team builds it. When goals change, the PRD is updated and the process restarts, which is expensive.

In agent-enabled workflows, planning becomes iterative rather than static.

Agents can consume telemetry, performance signals, error rates, and user behavior to surface insights that inform planning in real time. A feature performing below expectation does not wait for the next sprint retrospective. The signal is immediate. The planning response can be immediate as well.

This does not remove the need for human judgment in product decisions. It removes the latency between signal and response, giving engineering leadership faster feedback to make better decisions.

6. Parallel Execution Across the Codebase

One of the most structurally significant properties of multi-agent development is true parallelism across workstreams.

A single engineer context-switches between tasks. A team parallelizes work through specialization and coordination, but coordination itself has overhead. Stand-ups, PR reviews, merge conflicts, and dependency management all consume time.

Multiple specialized agents can operate simultaneously without most of these coordination costs:

- One agent generates the implementation for a new API endpoint

- Another validates it against existing integration tests

- A third scans for security vulnerabilities in the new code paths

- A fourth updates the documentation

In practice, this is what makes the ADLC faster than the SDLC even with the same team size. The throughput multiplier is not just about code generation speed. It is about eliminating the serialization bottleneck that human coordination introduces.
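The four workstreams listed above can be modelled, very loosely, as concurrent tasks. The sketch below uses Python's `asyncio.gather` and treats each agent as a placeholder coroutine that only sleeps; `run_agent`, `run_workstreams`, and the `WORKSTREAMS` durations are hypothetical. It also assumes the workstreams are independent, whereas in a real pipeline validation would typically wait on the implementation it validates.

```python
import asyncio
from typing import Dict, List


async def run_agent(role: str, seconds: float) -> str:
    # Placeholder for real agent work (model calls, test runs, scans).
    await asyncio.sleep(seconds)
    return f"{role}: done"


async def run_workstreams(workstreams: Dict[str, float]) -> List[str]:
    # gather() schedules the coroutines concurrently and returns their
    # results in the same order as the inputs.
    return await asyncio.gather(
        *(run_agent(role, seconds) for role, seconds in workstreams.items())
    )


# Hypothetical workstreams with made-up durations in seconds.
WORKSTREAMS = {
    "implement endpoint": 0.02,
    "run integration tests": 0.03,
    "security scan": 0.01,
    "update docs": 0.01,
}

if __name__ == "__main__":
    for line in asyncio.run(run_workstreams(WORKSTREAMS)):
        print(line)
```

Because the tasks overlap, total wall-clock time in this sketch is close to the longest single task rather than the sum of all four, which is the serialization saving the section describes.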

7. Real-Time Feedback Loops: Development and Operations Converge

In the SDLC, DevOps represented the effort to tighten the loop between development and production operations. CI/CD pipelines, monitoring dashboards, on-call rotations, and incident response processes all existed to close that feedback gap.

In the ADLC, that gap continues to shrink.

Production telemetry feeds directly into development context. Agents can detect anomalies in production, trace them to specific code paths, propose fixes, and open pull requests before a human engineer has finished reading the alert.

This does not mean autonomous production changes without human oversight. Most mature teams will (and should) maintain human approval gates for production deployments. But the work of diagnosis, root cause analysis, and solution proposal shifts from human to agent, freeing engineers to focus on judgment calls rather than investigation.
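One way to picture a human approval gate is a deploy step that refuses to run until a named reviewer has signed off. This is a minimal sketch under that assumption; `FixProposal`, `approve`, and `deploy` are hypothetical names, and a real gate would normally live in the CI/CD system rather than in application code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FixProposal:
    alert_id: str
    root_cause: str                      # agent-produced diagnosis
    patch_summary: str                   # agent-produced fix description
    approved_by: Optional[str] = None    # set only by a human reviewer


def approve(proposal: FixProposal, reviewer: str) -> FixProposal:
    if not reviewer.strip():
        raise ValueError("a named human reviewer is required")
    proposal.approved_by = reviewer
    return proposal


def deploy(proposal: FixProposal) -> str:
    # The gate: agent output alone is never enough to reach production.
    if proposal.approved_by is None:
        raise PermissionError(f"{proposal.alert_id}: awaiting human approval")
    return f"deployed fix for {proposal.alert_id} (approved by {proposal.approved_by})"
```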

The line between development and operations does not disappear. It becomes a continuous feedback loop rather than a handoff between teams.

8. Role Transformation: The Engineer as Architect and Orchestrator

The most important implication of the ADLC is not speed. It is role transformation.

In the SDLC, the primary value of an engineer is execution: writing correct, maintainable, performant code. In the ADLC, execution shifts to agents. The primary value of an engineer becomes something different:

Defining the system that produces correct, maintainable, performant code.

This means:

- Setting architecture constraints that agents operate within

- Defining quality standards and testing requirements that drive continuous verification

- Designing the feedback loops between production signals and development priorities

- Making judgment calls on trade-offs that require business, user, and technical context simultaneously

- Reviewing agent-generated output with the pattern recognition that comes from years of engineering experience

This is not a deskilling of the engineering profession. It is an elevation. The engineers who thrive in ADLC environments are those who develop strong systems thinking, architecture judgment, and the ability to reason about what agents should and should not be trusted to do autonomously.

The future engineer is an architect and orchestrator of intelligent systems, not a faster typist.

9. What Engineering Leaders Should Prepare For

The transition from SDLC to ADLC is not an event. It is a gradient, and most teams are already somewhere on it.

Practical steps for engineering leaders:

Audit your current feedback loop latency. How long does it take from writing code to seeing a test failure? From a production anomaly to a fix in review? The ADLC compresses each of these. Identify where your longest delays sit today.
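A latency audit can start very simply: collect pairs of timestamps (for example, commit pushed and first test failure reported) and look at the median gap. The sketch below assumes ISO 8601 timestamp strings exported from whatever systems hold that data; `median_latency_minutes` and the sample values are hypothetical.

```python
from datetime import datetime
from statistics import median
from typing import Iterable, Tuple


def median_latency_minutes(pairs: Iterable[Tuple[str, str]]) -> float:
    """Median gap in minutes between (start, end) ISO 8601 timestamps.

    Raises statistics.StatisticsError if no pairs are given.
    """
    minutes = [
        (datetime.fromisoformat(end) - datetime.fromisoformat(start)).total_seconds() / 60
        for start, end in pairs
    ]
    return median(minutes)


# Made-up sample: time from push to first reported test failure.
push_to_failure = [
    ("2025-01-06T10:00:00", "2025-01-06T10:05:00"),
    ("2025-01-06T11:00:00", "2025-01-06T11:15:00"),
    ("2025-01-06T13:00:00", "2025-01-06T13:40:00"),
]
```

The median is used here rather than the mean so that a single stalled pipeline run does not dominate the picture; teams that care about worst cases may prefer a high percentile instead.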

Invest in agent infrastructure, not just agent tools. Individual coding assistants are the beginning. Durable competitive advantage comes from building internal agent workflows that encode your team's standards, architecture patterns, and quality requirements.

Redefine engineering productivity metrics. Lines of code and velocity points were already poor proxies for value. In an agent-driven world, they become meaningless. Measure outcomes: system reliability, cycle time from idea to production, defect escape rate, and architectural decision quality.
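Of the outcome metrics named above, defect escape rate is the easiest to pin down numerically. A common definition, assumed here, is the share of all defects found in a period that were found only after release. The function name and arguments are hypothetical.

```python
def defect_escape_rate(escaped: int, caught_before_release: int) -> float:
    """Fraction of known defects that reached production.

    escaped: defects first found after release
    caught_before_release: defects found by tests, review, or QA
    """
    if escaped < 0 or caught_before_release < 0:
        raise ValueError("defect counts cannot be negative")
    total = escaped + caught_before_release
    if total == 0:
        return 0.0  # no defects recorded in the period
    return escaped / total
```

For example, 2 escaped defects against 18 caught before release gives 2 / 20, an escape rate of 10%.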

Develop agent oversight as an engineering discipline. Agents produce output at a rate humans cannot review line by line. Engineering teams need to develop systematic approaches to reviewing agent work: sampling strategies, automated validation layers, and escalation criteria for human review.
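Sampling strategies and escalation criteria can be expressed as a small routing rule. The sketch below is one possible shape, not a recommended policy: the sensitive path prefixes, the 400-line threshold, the 10% sample rate, and the name `review_decision` are all assumptions chosen for illustration.

```python
import hashlib
from typing import Sequence

# Hypothetical areas where every agent change gets a human reviewer.
SENSITIVE_PREFIXES = ("auth/", "payments/", "migrations/")


def review_decision(
    change_id: str,
    paths: Sequence[str],
    lines_changed: int,
    sample_rate: float = 0.1,
    max_lines: int = 400,
) -> str:
    """Return 'human_review', 'sampled_review', or 'automated_only'."""
    # Escalation criteria: sensitive areas and large diffs always go to a human.
    if any(path.startswith(SENSITIVE_PREFIXES) for path in paths):
        return "human_review"
    if lines_changed > max_lines:
        return "human_review"
    # Sampling: hash the change id into [0, 1) so the same change always
    # gets the same decision, which keeps audits reproducible.
    digest = hashlib.sha256(change_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "sampled_review" if bucket < sample_rate else "automated_only"
```

Changes routed to `automated_only` would still pass through the automated validation layers mentioned above; the rule only decides where human attention is spent.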

Retain deep technical expertise. Agents are only as good as the engineers who design the systems they operate within and review the output they produce. A team that loses deep technical capability in favor of pure agent orchestration will eventually lose the ability to evaluate whether agent output is correct.

Conclusion

The SDLC served software engineering well for decades. It encoded hard-won lessons about how to coordinate human teams, manage complexity, and deliver quality software under uncertainty.

The ADLC does not invalidate those lessons. It changes the medium through which they are applied.

Requirements still need to be understood deeply. Architecture decisions still require careful judgment. Quality standards still need to be defined and enforced. But the execution (generating implementations, running tests, and monitoring production systems) increasingly happens in continuous collaboration with intelligent agents rather than through sequential human effort.

This shift is underway. The teams that will lead the next decade of software delivery are the ones that understand it clearly and build toward it deliberately.

At JMS Technologies Inc., we design engineering systems for this new paradigm, helping teams architect agent-enabled development workflows that are fast, safe, and built to scale.

Ready to design your ADLC? Let's talk.